The Great Password Dilemma

Posted on Fri 17 April 2026 in Engineering Stories

We encode information every single day — and we almost never think about it.

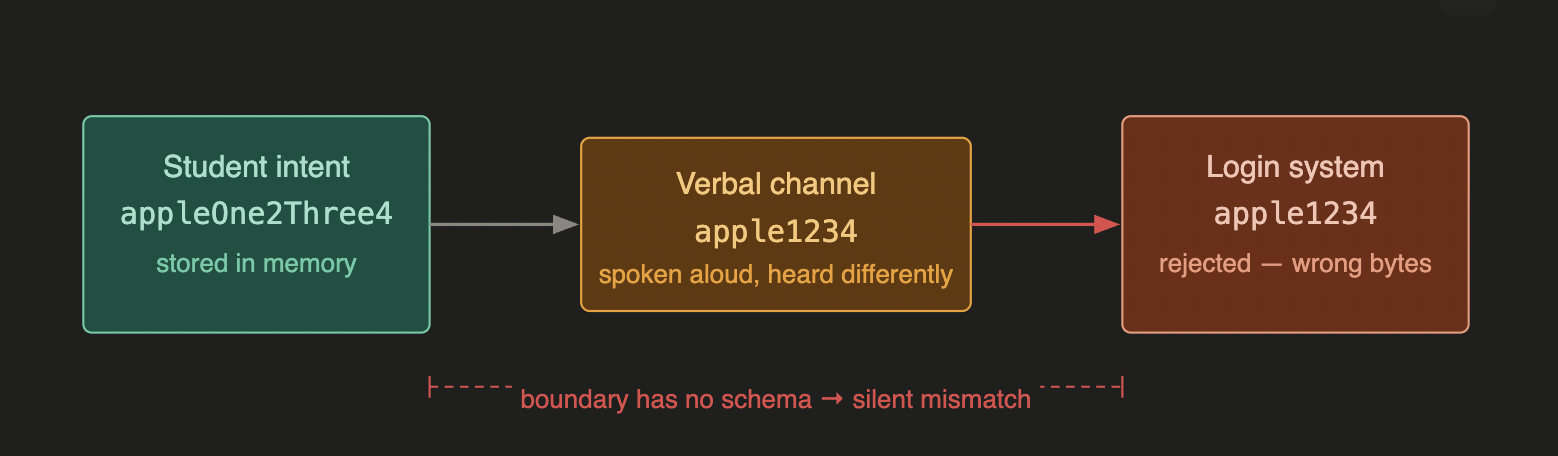

A student called me to get her account unlocked. She dictated her password over the phone: apple1234. I typed it in. Login failed. We went back and forth, both confused. Then she clarified: she meant appleOne2Three4.

Same sounds. Completely different strings.

The gap nobody talks about

What happened here is not a user error story. It is an encoding ambiguity story. The student had a mental model of her password that mixed two symbol systems: after the lowercase word, some numbers were spelled out as capitalized words (One, Three) and others were digits (2, 4). When she verbalized it, she collapsed both into one spoken stream, because "One" and "1" sound identical. What I heard was the all-digit encoding. What she meant was the mixed encoding.

This is exactly what happens at system boundaries.

Schema mismatch at the human layer — Two humans, same language, same phone call, different deserialization of the same spoken input. She emitted appleOne2Three4. I received apple1234. No error was thrown. No validation failed. The bug was invisible until the downstream system, the login form, rejected it.
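To make the mismatch concrete, here is a minimal sketch of how many strings a listener could plausibly type for one spoken utterance. The `RENDERINGS` table and the `candidate_strings` helper are hypothetical names made up for this illustration, and the sketch assumes only two ways to write each number word (a digit or a capitalized word).

```python
from itertools import product

# Hypothetical table: the ways a listener might write down each spoken number word.
RENDERINGS = {
    "one": ("1", "One"),
    "two": ("2", "Two"),
    "three": ("3", "Three"),
    "four": ("4", "Four"),
}


def candidate_strings(spoken_words):
    """Return every string a listener could type for the given spoken words."""
    # Words without an entry in the table have exactly one rendering: themselves.
    options = [RENDERINGS.get(word, (word,)) for word in spoken_words]
    return ["".join(choice) for choice in product(*options)]


heard = ["apple", "one", "two", "three", "four"]
candidates = candidate_strings(heard)

print(len(candidates))                    # 16
print("apple1234" in candidates)          # True
print("appleOne2Three4" in candidates)    # True
```

Even under this narrow assumption, one utterance maps to 16 candidate strings, and both the string I typed and the string she meant are among them. Add capitalization of the word itself and the space grows further.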

The cost of ambiguous contracts — In distributed systems, we obsess over typed schemas and versioned APIs so that producers and consumers agree on what a field means, not just what it contains. "1234" and "One2Three4" are both valid strings. But only one of them is the correct password. Without a shared schema — a contract — both parties can be confident and both can be wrong.

Verbal protocols are lossy channels — Telephone, Slack voice notes, recorded instructions: these are all channels where encoding fidelity degrades. The NATO phonetic alphabet exists precisely because B, D, and E collapse into each other over a noisy line. Passwords, API keys, config values: anything that must survive a verbal channel needs a disambiguation protocol baked in.
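One possible disambiguation protocol, sketched under assumptions: read the secret out character by character, tagging each one with its kind. The code words are the standard NATO spelling alphabet; the `spell_out` function and the "lowercase" / "capital" / "digit" / "symbol" tags are conventions invented for this example, not an established standard.

```python
import string

# Standard NATO spelling alphabet code words, in a-z order.
CODE_WORDS = (
    "Alfa Bravo Charlie Delta Echo Foxtrot Golf Hotel India Juliett Kilo Lima Mike "
    "November Oscar Papa Quebec Romeo Sierra Tango Uniform Victor Whiskey X-ray "
    "Yankee Zulu"
).split()
NATO = dict(zip(string.ascii_lowercase, CODE_WORDS))


def spell_out(secret):
    """Turn a string into one unambiguous spoken token per character."""
    tokens = []
    for ch in secret:
        if ch in string.ascii_lowercase:
            tokens.append(f"lowercase {NATO[ch]}")
        elif ch in string.ascii_uppercase:
            tokens.append(f"capital {NATO[ch.lower()]}")
        elif ch in string.digits:
            tokens.append(f"digit {ch}")
        else:
            # Anything else is read out as a quoted symbol (assumed convention).
            tokens.append(f"symbol {ch!r}")
    return tokens


meant = spell_out("appleOne2Three4")
typed = spell_out("apple1234")

print(meant[5])  # capital Oscar
print(typed[5])  # digit 1
```

With this protocol the two passwords diverge at the sixth token, "capital Oscar" versus "digit 1", so the mismatch surfaces during the call instead of at the login form. The cost is a much longer utterance, which is the usual trade of bandwidth for fidelity.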

Human memory stores intent, not bytes — The student was not wrong. She remembered her password perfectly. She stored the intent: a fruit word followed by the numbers one through four, with one and three spelled out. The login form stored the bytes. These are two different things, and neither party was broken. The interface between them was.

This tiny incident is a clean illustration of something I keep coming back to in agent and system design: reliability lives at the boundary, not inside the component. The student's memory was reliable. The login system was reliable. The boundary between human verbal output and system string input had no schema. That is where the failure lived.

Next time a system rejects your input, ask not "what did I type wrong?" but "what contract did I violate, and where was that contract defined?"